Embedded AI

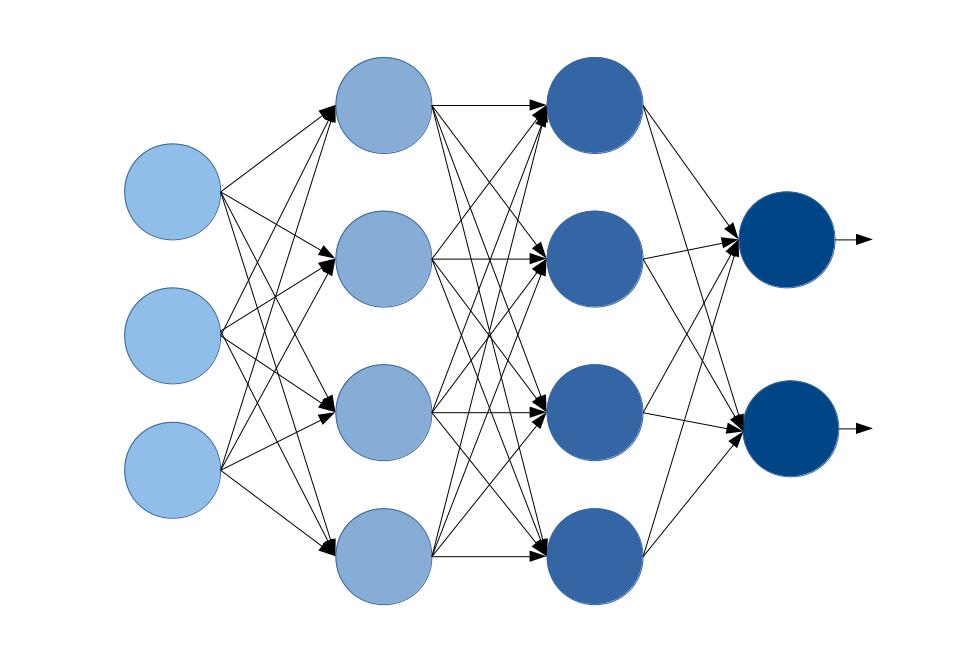

In recent years, neural networks have proven able to solve a wide variety of problems. They are particularly useful when plenty of data is available but no formal description of the problem exists. Their mathematical structure allows a high degree of parallelization and the use of bit-width optimizations, which makes them well suited to implementation on FPGAs. As a result, the advantages of AI can also be used in the embedded sector.
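To illustrate what a bit-width optimization can look like, here is a minimal Python sketch. It rests on assumptions of our own choosing: a signed 8-bit fixed-point format with 6 fractional bits, and the hypothetical helper names `quantize` and `dot_fixed`. It does not describe a specific FPGA toolchain.

```python
def quantize(values, total_bits=8, frac_bits=6):
    """Round floats to signed fixed-point integer codes, saturating at the range limits.

    The format (8 bits total, 6 fractional bits) is an illustrative assumption.
    """
    scale = 1 << frac_bits
    lowest = -(1 << (total_bits - 1))
    highest = (1 << (total_bits - 1)) - 1
    return [max(lowest, min(highest, round(v * scale))) for v in values]


def dot_fixed(weight_codes, input_codes):
    """Integer multiply-accumulate of one neuron.

    Each product is independent of the others, which is why this step
    can be computed in parallel in hardware.
    """
    return sum(w * x for w, x in zip(weight_codes, input_codes))


weights = [0.5, -0.25, 0.75, -1.0]   # example values, not from a trained network
inputs = [1.0, 0.5, -0.5, 0.25]

w_q = quantize(weights)
x_q = quantize(inputs)
acc = dot_fixed(w_q, x_q)

# The product of two values with 6 fractional bits has 12 fractional bits.
result = acc / (1 << 12)
reference = sum(w * x for w, x in zip(weights, inputs))
print(result, reference)
```

The example values are exactly representable in the chosen format, so the fixed-point result matches the floating-point reference. With real weights, the difference between the two is the quantization error that has to be weighed against the saved logic resources.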

The process for implementing a neural network in the FPGA includes, among other things:

- Data preparation

- Prototyping and network design with Python machine learning frameworks

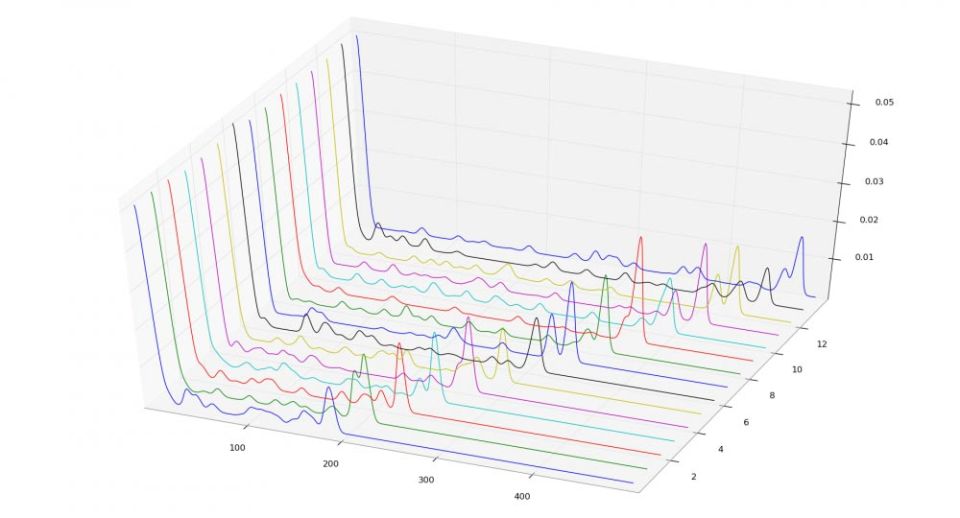

- Offline training of the network with datasets

- Transferring the architecture into an FPGA design

- Interface specification
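As one possible illustration of the step from a trained network to an FPGA design, the following Python sketch converts weights that are already quantized into two's-complement hex words, one per line. This is the kind of text format that Verilog's `$readmemh` can load into a memory. The function names, the 8-bit width and the weight values are assumptions made for this example only.

```python
def to_twos_complement_hex(code, total_bits=8):
    """Encode a signed integer as a fixed-width two's-complement hex word."""
    lowest = -(1 << (total_bits - 1))
    highest = (1 << (total_bits - 1)) - 1
    if not lowest <= code <= highest:
        raise ValueError(f"{code} does not fit into {total_bits} signed bits")
    hex_digits = (total_bits + 3) // 4           # round up to whole hex digits
    mask = (1 << total_bits) - 1                 # keep only the lowest total_bits
    return format(code & mask, f"0{hex_digits}x")


def weights_to_mem_lines(codes, total_bits=8):
    """Return one hex word per weight, in memory address order."""
    return [to_twos_complement_hex(c, total_bits) for c in codes]


# Hypothetical quantized weights of one neuron (signed 8-bit integers).
layer_codes = [32, -16, 48, -64]

mem_lines = weights_to_mem_lines(layer_codes)
mem_text = "\n".join(mem_lines) + "\n"
print(mem_text, end="")
```

In a real project, this text would be written to a file and referenced from the hardware description; the file name and memory layout belong to the interface specification.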

With FPGAs, machine learning algorithms can also be used where low latency and demanding performance requirements must be met.

As part of the "Digital Innovation" programme of the state of Saxony-Anhalt, we were able to expand our expertise in implementing artificial neural networks (ANNs) on FPGAs. If you have any questions or requests regarding ANNs on FPGAs, please contact us. You are also welcome to use our contact form.